11 Platforms. 8 Criteria.

One Clear Picture.

A music studio needed to choose a practice management platform. The market was crowded with competing claims and no clear winner. This is how we cut through it.

The Problem

A local music studio owner was ready to adopt a practice management platform but didn't know where to start. The market had fragmented over the past few years, with a growing number of AI-powered tools making overlapping claims about engagement, feedback quality, and teacher efficiency.

She knew what she needed. She didn't know which platforms actually delivered it.

Her initial ask was straightforward: fill in a Google Sheet grid she had set up with our findings on each platform. It was a reasonable starting point. We replaced it with something better.

The Brief

8 criteria. All defined by the studio owner before research began.

The Process

Research began cold — no assumed winners, no vendor bias. Eleven platforms were evaluated entirely from public-facing information: marketing websites, product documentation, demo videos, help articles, and free trials where available.

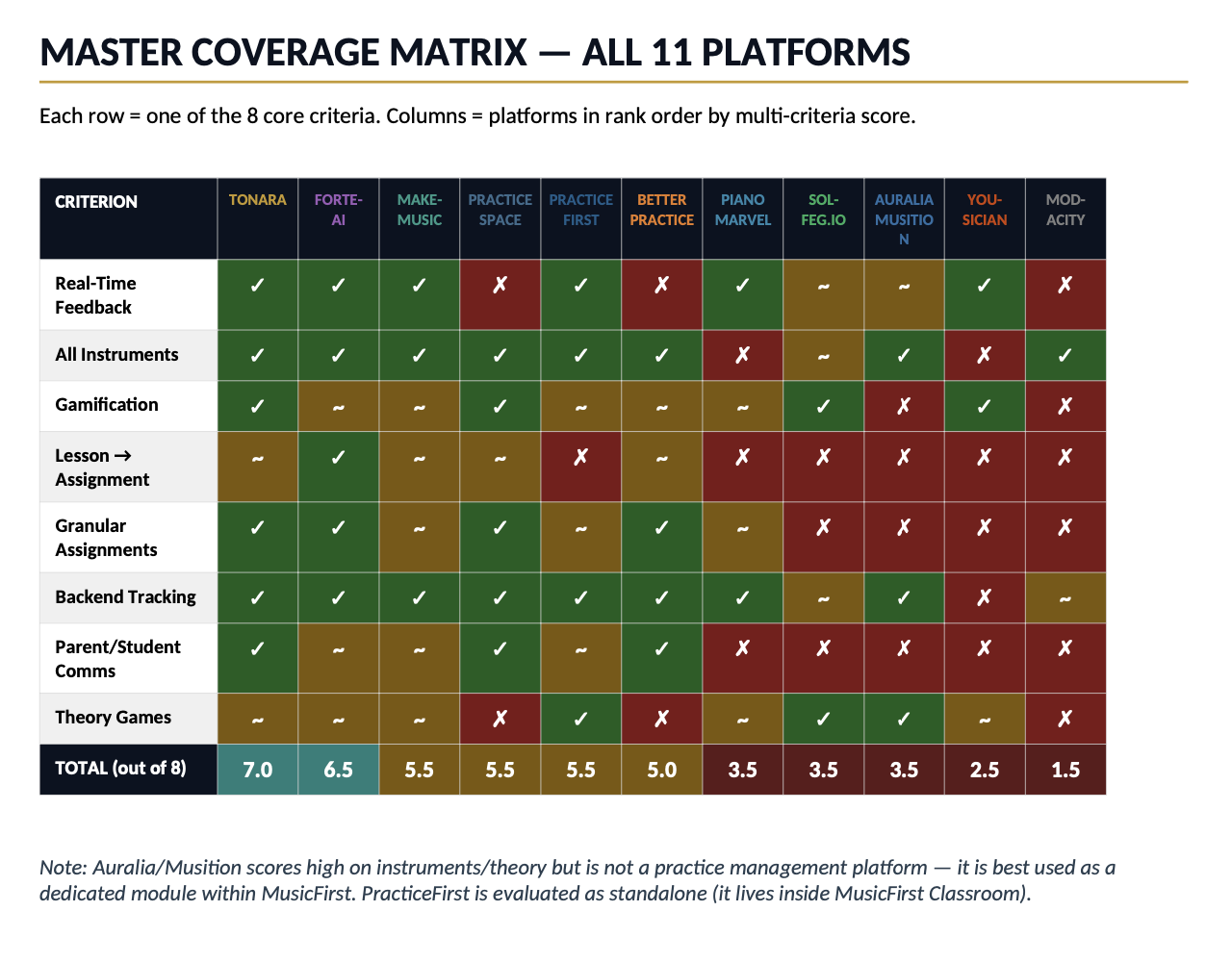

Each platform was scored against all 8 criteria using a consistent three-tier scale: full support, partial support, or not supported. Platforms that didn't meet a baseline multi-instrument threshold were excluded from the main matrix but documented separately with estimated scores for reference.

The entire research and writing process was completed in a matter of days.

What We Found

"The most striking finding wasn't which platform won — it was how few platforms scored well across the full set of criteria."

The market is fragmented in a predictable way. Consumer-facing apps optimize for engagement but lack teacher tools; institutional platforms have strong backends but weak gamification; newer AI-first tools are promising but unproven at scale. Only two platforms cleared a score of 6.5 out of a possible 8.

The Deliverable

The final report covered all 11 platforms. The scored platforms appeared in the full 8-criteria matrix, each with its own deep-dive profile, and the excluded platforms were documented in a separate section with estimated scores. A set of key observations synthesized the most important findings, and vendor-reported claims were called out separately from features that could be independently observed.

Report cover and framing

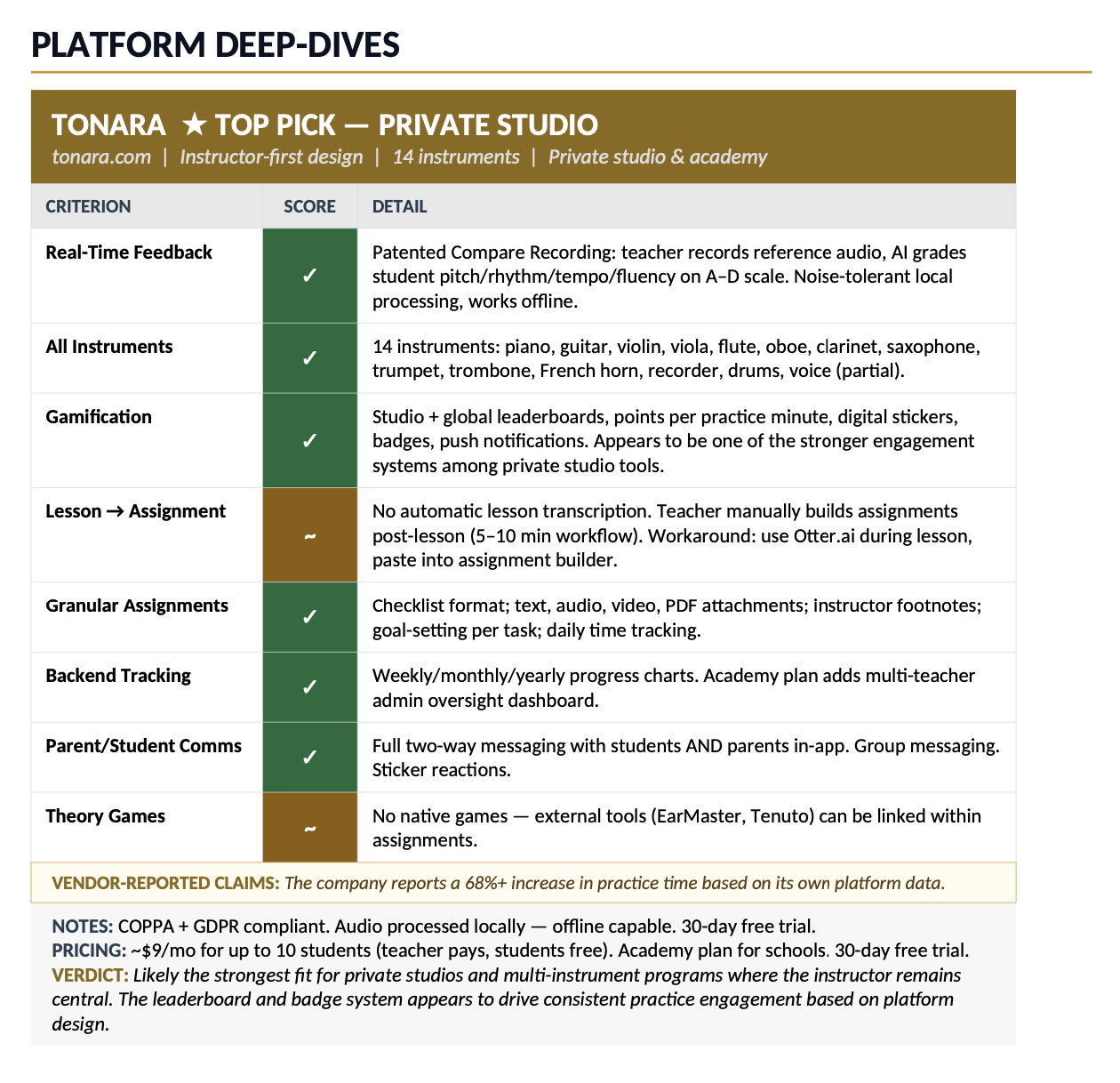

Platform deep-dive profile — Tonara

The report replaced the original Google Sheet template entirely. The coverage matrix proved faster to scan than a spreadsheet grid, and the deep-dive profiles carried far more decision-relevant detail than a single cell entry ever could.

Her reaction: impressed by both the depth and the design.

Where Things Stand

The studio is still in the evaluation phase. The report narrowed the field and clarified the decision, but some of the owner's more granular questions will require direct vendor contact to answer. That is a normal and healthy next step: the report did its job by taking her from eleven candidates to the right three.

The engagement also revealed a gap in the market worth watching: none of the eleven platforms evaluated delivers a true lesson-transcription workflow. The platform that solves that problem cleanly is likely to gain a significant advantage.